March 24, 2026

Can Cosmos Predict2.5 Predict Physically Realistic Scenes from Isaac Sim?

Testing NVIDIA's Cosmos Predict2.5 video generation model on physics-based simulations from Isaac Sim.

Overview

Video generation models have made remarkable progress, but can they accurately predict physics? In this research post, we evaluate NVIDIA Cosmos Predict2.5 on its ability to generate physically realistic bouncing-ball videos, comparing its outputs against ground-truth physics simulations from Isaac Sim.

Test Methodology

Infrastructure Setup

We ran our experiments on cloud GPU infrastructure:

- Platform: RunPod GPU cloud

- GPU: NVIDIA RTX 6000 Ada (48GB VRAM)

- Model: Cosmos Predict2.5-2B (Video2World)

- Dataset: Custom Isaac Sim bouncing balls dataset

Dataset Generation

The ground truth dataset was generated using NVIDIA Isaac Sim with precise physics parameters:

| Parameter | Value |
|---|---|
| Physics Engine | PhysX 5 |
| Gravity | -9.81 m/s² |
| Ball Restitution | 0.65 to 0.89 |
| Frame Rate | 16 FPS |
| Resolution | 512×512 |

Each scene consists of 5 input frames (conditioning) and 80+ ground truth frames showing the physics simulation.
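
As a rough reference for what these parameters imply, the motion of a single idealized ball has a closed form. The sketch below is not the Isaac Sim scene code: it assumes a point ball dropped from rest, no air drag, and a constant restitution coefficient. The drop height of 2.0 m and restitution of 0.8 in the usage note are illustrative values we picked (0.8 sits inside the 0.65 to 0.89 range above), and the function names are hypothetical.

```python
import math

G = 9.81   # gravitational acceleration magnitude, m/s^2 (from the table above)
FPS = 16   # dataset frame rate (from the table above)


def apex_heights(h0: float, restitution: float, bounces: int) -> list[float]:
    """Apex heights h_0..h_n of an ideal ball, using h_n = e^(2n) * h0."""
    return [h0 * restitution ** (2 * n) for n in range(bounces + 1)]


def height_at(t: float, h0: float, restitution: float) -> float:
    """Height (m) at time t (s) of an ideal ball dropped from rest at h0."""
    # First segment: free fall from h0 until the first impact.
    t_fall = math.sqrt(2 * h0 / G)
    if t < t_fall:
        return h0 - 0.5 * G * t * t
    t -= t_fall
    v = restitution * math.sqrt(2 * G * h0)  # rebound speed after first impact
    # Each later segment is a full parabolic hop lasting 2 * v / G seconds.
    while v > 1e-6:
        hop = 2 * v / G
        if t < hop:
            return v * t - 0.5 * G * t * t
        t -= hop
        v *= restitution  # each impact scales the rebound speed by e
    return 0.0  # ball has effectively come to rest
```

Sampling `[height_at(i / FPS, 2.0, 0.8) for i in range(80)]` gives an 80-frame reference track. With these assumed values the first impact falls between frames 10 and 11, well after the 5 conditioning frames, so the model would have to infer the bounce rather than copy it.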

Inference Configuration

For Cosmos Predict2.5 inference, we used the following settings:

- Guidance Scale: 7.0 (higher values enforce stronger prompt adherence)

- Inference Steps: 35

- Output Resolution: 848×480

- Conditioning: First 5 frames from Isaac Sim
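
For bookkeeping, the settings above can be collected in one place. This is a minimal sketch: the class and field names are our own illustrative choices, not Cosmos Predict2.5 command-line flags or API parameters, and the two derived properties only restate arithmetic on numbers already given in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceSettings:
    """Settings listed in this post; field names are illustrative, not Cosmos flags."""
    guidance_scale: float = 7.0
    inference_steps: int = 35
    output_width: int = 848
    output_height: int = 480
    conditioning_frames: int = 5
    source_width: int = 512    # Isaac Sim render width
    source_height: int = 512   # Isaac Sim render height
    source_fps: int = 16       # Isaac Sim frame rate

    @property
    def conditioning_seconds(self) -> float:
        """Duration of motion the model sees before it has to predict."""
        return self.conditioning_frames / self.source_fps

    @property
    def aspect_mismatch(self) -> bool:
        """True when source and output aspect ratios differ."""
        # Cross-multiply to compare the ratios without float rounding.
        return (self.output_width * self.source_height
                != self.output_height * self.source_width)


settings = InferenceSettings()
```

Here `settings.conditioning_seconds` is 0.3125, so the model observes under a third of a second of motion, and `settings.aspect_mismatch` is `True`: the square 512×512 renders do not match the 848×480 output, so any comparison has to account for how the frames were resized, padded, or cropped.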

Prompt Engineering Deep Dive: Scene 0000

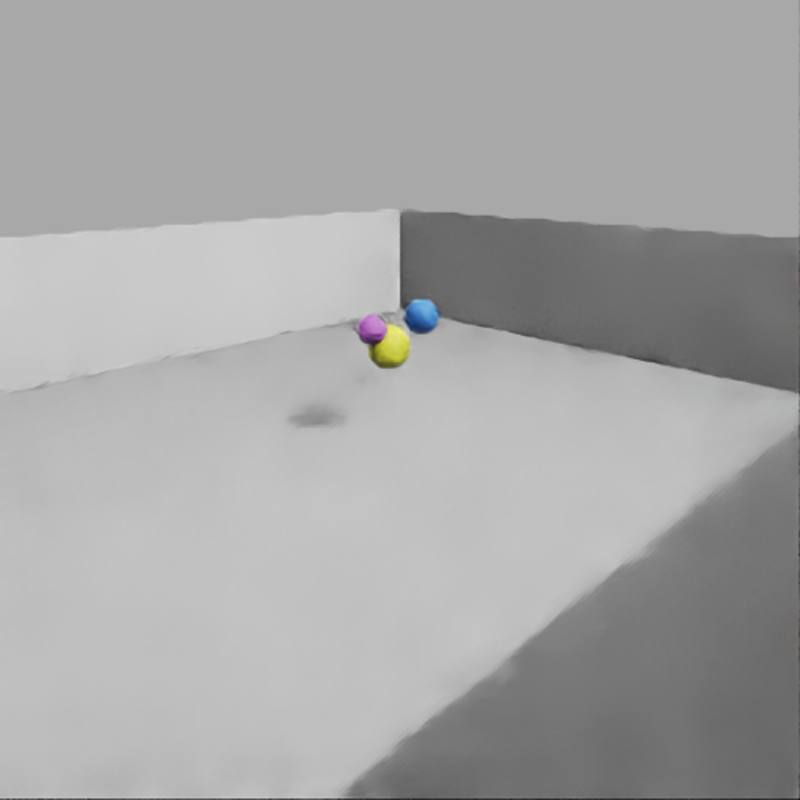

Our first test case involved three colored balls (purple, yellow, blue) falling and bouncing. We iteratively refined our prompts to achieve better results.

Figure: 2×2 grid, each panel labeled with its source: Initial Frame (top-left), Isaac Sim Ground Truth (top-right), Cosmos Predict with prompt Version 2 (bottom-left), Cosmos Predict with prompt Version 3 (bottom-right).

Prompt Evolution

Version 1: Basic Prompt

"A photorealistic scene of bouncing balls falling and bouncing on the ground"

Result: The video started correctly but suffered from scene drift: additional balls appeared mid-video.

Version 2: Temporal Consistency Focus

"Continue the motion of exactly three colored spheres falling and bouncing: one purple ball, one yellow ball, and one blue ball. These are the only three objects in the scene. Maintain temporal consistency..."

Result: The model kept exactly three balls, but they became completely static, with no falling or bouncing motion.

Version 3: Motion + Consistency Balance

"Continue the dynamic motion of exactly three colored spheres - one purple, one yellow, and one blue - as they fall downward and bounce on the ground. The balls are actively falling due to gravity and bouncing with realistic physics. Maintain continuous motion..."

Result: Better balance between object consistency and dynamic motion.

Key Learnings

- Action verbs matter: Words like “falling,” “bouncing,” “hitting” are critical for generating motion

- Over-constraining freezes motion: Too much emphasis on “maintain consistency” can freeze the physics entirely

- Order matters: Placing motion descriptions before consistency constraints produced better results
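
The two failure modes seen with Versions 1 and 2 (extra balls appearing, and balls freezing in place) could be flagged automatically instead of by eye. The sketch below assumes some upstream detector or tracker already provides ball centres per frame in pixel coordinates; that tracker, the function names, and the 0.5-pixel threshold are all hypothetical and not part of our pipeline.

```python
import math

Point = tuple[float, float]  # (x, y) ball centre in pixels


def count_drift_frames(detections: list[list[Point]], expected: int) -> list[int]:
    """Indices of frames whose detected ball count differs from the expected count."""
    return [i for i, balls in enumerate(detections) if len(balls) != expected]


def mean_step(track: list[Point]) -> float:
    """Average per-frame displacement of one ball centre, in pixels."""
    if len(track) < 2:
        return 0.0
    steps = [math.dist(a, b) for a, b in zip(track, track[1:])]
    return sum(steps) / len(steps)


def is_frozen(track: list[Point], min_step_px: float = 0.5) -> bool:
    """True when a ball barely moves; the threshold is an arbitrary assumption."""
    return mean_step(track) < min_step_px
```

A Version 1 style output would show up as a non-empty result from `count_drift_frames(detections, expected=3)`, while a Version 2 style output would make `is_frozen` return `True` for every ball.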

Multi-Scene Analysis

We extended our evaluation to scenes with different object counts to test generalization.

Scene 0001: Single Ball (Yellow)

Three-column comparison with labeled panels: Initial Frame (left), Isaac Sim Ground Truth showing 1 yellow ball (center), Cosmos Predict v3 output (right).

Prompt Used:

"Continue the dynamic motion of exactly one yellow sphere as it falls downward and bounces on the ground. The ball is actively falling due to gravity and bouncing with realistic physics..."

Scene 0002: Two Balls (Red and Yellow)

Three-column comparison with labeled panels: Initial Frame (left), Isaac Sim Ground Truth showing 2 balls - red and yellow (center), Cosmos Predict v3 output (right).

Prompt Used:

"Continue the dynamic motion of exactly two colored spheres - one red and one yellow - as they fall downward and bounce on the ground. The balls are actively falling due to gravity and bouncing with realistic physics..."
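
The Version 3 prompts for all three scenes share one motion-first template, so they can be generated from a list of ball colors. This is a minimal sketch with hypothetical function names; it reproduces only the opening sentences visible in the prompts above and does not attempt to fill in the parts elided with "...".

```python
def describe_balls(colors: list[str]) -> str:
    """Render the object clause of a Version 3 style prompt."""
    if not colors:
        raise ValueError("at least one ball color is required")
    if len(colors) == 1:
        return f"exactly one {colors[0]} sphere"
    number_words = {2: "two", 3: "three"}
    count = number_words.get(len(colors), str(len(colors)))
    items = [f"one {color}" for color in colors]
    if len(items) == 2:
        listing = " and ".join(items)
    else:
        listing = ", ".join(items[:-1]) + ", and " + items[-1]
    return f"exactly {count} colored spheres - {listing} -"


def build_motion_prompt(colors: list[str]) -> str:
    """Motion-first opening of a Version 3 style prompt."""
    subject = describe_balls(colors)
    if len(colors) == 1:
        motion = "as it falls downward and bounces on the ground. The ball is"
    else:
        motion = "as they fall downward and bounce on the ground. The balls are"
    return (f"Continue the dynamic motion of {subject} {motion} "
            "actively falling due to gravity and bouncing with realistic physics.")
```

For example, `build_motion_prompt(["red", "yellow"])` yields the visible part of the Scene 0002 prompt. Any consistency constraints would be appended after this motion description, in line with the ordering observation in Key Learnings.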

Why Physics Prediction is Hard

Our experiments reveal fundamental challenges in using video generation models for physics prediction:

1. Training Data Distribution Mismatch

Cosmos Predict2.5 was trained primarily on natural videos, not physics simulations. The model hasn’t learned the precise mathematical relationships governing:

- Projectile motion under gravity

- Partially elastic collision dynamics (restitution below 1)

- Energy conservation and dissipation

2. Temporal Consistency vs. Physical Accuracy

There’s an inherent tension between:

- Visual coherence: Keeping objects consistent frame-to-frame

- Physical accuracy: Following correct trajectories and collision responses

The model often sacrifices one for the other.

3. Prompt Sensitivity

Small changes in prompt wording dramatically affect output:

- “Maintain consistency” → Objects freeze

- “Continue motion” → Objects move but may drift

- Finding the right balance requires extensive iteration

4. No Explicit Physics Engine

Unlike Isaac Sim, which uses PhysX for deterministic simulation, Cosmos Predict2.5 relies on learned priors from training data. It cannot:

- Calculate exact bounce heights based on restitution coefficients

- Model precise collision timing

- Ensure energy conservation
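
One way to make the first and third points checkable is to recover an effective restitution coefficient from a generated video. For an ideal ball, successive apex heights satisfy h_{n+1} = e² · h_n, so e can be estimated from their ratio. The sketch below assumes apex heights above the ground have already been measured, in any consistent unit, by some hypothetical tracking step; the function names and tolerance are illustrative.

```python
import math


def restitution_from_apexes(apex_heights: list[float]) -> list[float]:
    """Per-bounce restitution estimates, e_n = sqrt(h_{n+1} / h_n)."""
    pairs = zip(apex_heights, apex_heights[1:])
    return [math.sqrt(h_next / h) for h, h_next in pairs if h > 0]


def gains_energy(apex_heights: list[float], tolerance: float = 1e-3) -> bool:
    """True if any apex is higher than the previous one.

    A passive bouncing ball cannot do this, so it signals a physics violation.
    """
    pairs = zip(apex_heights, apex_heights[1:])
    return any(h_next > h + tolerance for h, h_next in pairs)
```

A physically plausible output would give roughly constant estimates inside the dataset's 0.65 to 0.89 range and `gains_energy` returning `False`; estimates that wander between bounces, or an apex that rises, would indicate the model is not tracking a single consistent restitution.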

Future Research Directions

Based on these findings, we plan to explore:

- Physics-Conditioned Fine-Tuning: Training on synthetic physics datasets to improve physical accuracy

- Hybrid Approaches: Combining physics engines with generative models for guided synthesis

- Evaluation Metrics: Developing quantitative metrics for physics accuracy beyond visual quality

- Different Model Architectures: Testing physics-aware video models and world simulators
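
As a starting point for the evaluation-metrics direction, a simple candidate is the root-mean-square error between ground-truth and predicted ball-centre tracks. This is a sketch of one possible metric, not something we have run: it assumes per-frame ball centres are available from a hypothetical tracker, and it normalizes coordinates by frame size because the Isaac Sim renders (512×512) and Cosmos outputs (848×480) differ in resolution. How the aspect-ratio difference should be handled is left open.

```python
import math

Point = tuple[float, float]  # (x, y) ball centre


def normalise(track: list[Point], width: int, height: int) -> list[Point]:
    """Scale pixel coordinates to the unit square so resolutions are comparable."""
    return [(x / width, y / height) for x, y in track]


def trajectory_rmse(truth: list[Point], predicted: list[Point]) -> float:
    """RMSE between two centre tracks over the frames they share."""
    n = min(len(truth), len(predicted))
    if n == 0:
        raise ValueError("both tracks need at least one frame")
    squared = sum(
        (tx - px) ** 2 + (ty - py) ** 2
        for (tx, ty), (px, py) in zip(truth[:n], predicted[:n])
    )
    return math.sqrt(squared / n)
```

A typical call would be `trajectory_rmse(normalise(gt_track, 512, 512), normalise(pred_track, 848, 480))`, where `gt_track` and `pred_track` are placeholder names for the tracked centres of the same ball in each video.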

Conclusion

While Cosmos Predict2.5 produces visually impressive results, it struggles with physically accurate predictions. The model treats physics as a visual pattern rather than a mathematical system. For applications requiring precise physics simulation, traditional simulation engines remain essential.

However, video generation models show promise for:

- Rapid prototyping and visualization

- Augmenting training data for robotics

- Scenarios where approximate physics is acceptable

The gap between “looks physical” and “is physically accurate” represents an exciting frontier for future research in world models and video generation.

Experiments conducted March 2026. GPU infrastructure provided by RunPod. Isaac Sim dataset generated using NVIDIA Omniverse.